This guest post comes courtesy of Jason Bloomberg, managing partner at ZapThink.

By Jason Bloomberg

For several years now, ZapThink has spoken about SOA governance "in the narrow" vs. SOA governance "in the broad." SOA governance in the narrow refers to governance of the SOA initiative, and focuses primarily on the service lifecycle.

When vendors try to sell you SOA governance gear, they're typically talking about SOA governance in the narrow. SOA governance in the broad, in contrast, refers to IT governance in the SOA context. In other words, how will SOA help with IT governance (and by extension, corporate governance) once your SOA initiative is up and running?

In both our Licensed ZapThink Architect Boot Camp and our newer SOA and Cloud Governance Course, we also point out how governance typically involves human communication-centric activities like architecture reviews, human management, and people deciding to comply with policies. We point out this human context for governance to contrast it with the technology context that inevitably becomes the focus of SOA governance in the narrow. There is an important technology-centric SOA governance story to be told, of course, as long as it's placed into the greater governance context.

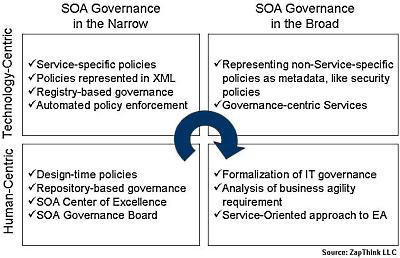

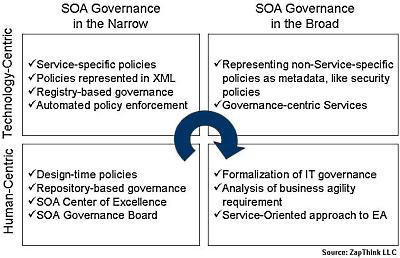

One question we haven't yet addressed in depth, however, is how these two contrasts -- narrow vs. broad, human vs. technology -- fit together. A closer look reveals an important trend taking shape as organizations mature their approach to SOA governance, and with it, the overall SOA effort. Following this trend to its natural conclusion highlights some important facts about SOA, and can help organizations understand where they want to end up as their SOA initiative reaches its highest levels of maturity.

Introducing the SOA governance grid

Whenever faced with two orthogonal contrasts, the obvious thing to do is put them in a grid. Let's see what we can learn from such a diagram:

The ZapThink SOA governance grid

First, let's take a look at what each square contains, starting with the lower left corner and moving clockwise, because as we'll see, that's the sequence that corresponds best to increasing levels of SOA maturity.

1. Human-centric SOA governance in the narrow

As organizations first look at SOA and the governance challenge it presents, they must decide how they want to handle various governance issues. They must set up a SOA governance board or other committee to make broad SOA policy decisions. We also recommend setting up a SOA Center of Excellence to coordinate such policies across the whole enterprise.

These policy decisions initially focus on how to address business requirements, how to assemble and coordinate the SOA team, and what the team will need to do as they ramp up the SOA effort. The output of such SOA governance activities tends to be written documents and plenty of conversations and meetings.

The tools architects use for this stage are primarily communication-centric, namely word processors and portals and the like. But this stage is also when the repository comes into play as a place to put many such design time artifacts, and also where architects configure design time workflows for the SOA team. Technology, however, plays only a supporting role in this stage.

2. Technology-centric SOA governance in the narrow

As the SOA effort ramps up, the focus naturally shifts to technology. Governance activities center on the registry/repository and the rest of the SOA governance gear. Architects roll up their sleeves and hammer out technology-centric policies, preferably in an XML format that the gear can understand. Representing certain policies as metadata enables automated communication and enforcement of those policies, and also makes it more straightforward to change those policies over time.
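To make the idea of policies as metadata a little more concrete, here is a minimal Python sketch. It assumes a made-up XML policy format (the `policies` and `policy` elements and their attributes are invented for illustration and follow no standard or vendor schema) and a service description held as a plain dictionary. It shows only the general shape of automated checking, not how any particular governance product works.

```python
import xml.etree.ElementTree as ET

# Hypothetical policy document; the element and attribute names are
# invented for this sketch and do not follow any standard schema.
POLICY_XML = """
<policies>
  <policy id="require-tls" attribute="transport" equals="https"/>
  <policy id="require-owner" attribute="owner" present="true"/>
</policies>
"""


def load_policies(xml_text):
    """Parse the policy metadata into a list of plain dictionaries."""
    root = ET.fromstring(xml_text)
    return [dict(p.attrib) for p in root.findall("policy")]


def violations(service, policies):
    """Return the ids of the policies that a service description violates."""
    failed = []
    for policy in policies:
        value = service.get(policy["attribute"])
        if "equals" in policy and value != policy["equals"]:
            failed.append(policy["id"])
        elif policy.get("present") == "true" and not value:
            failed.append(policy["id"])
    return failed


policies = load_policies(POLICY_XML)
order_service = {"name": "OrderService", "transport": "http", "owner": "sales-it"}
print(violations(order_service, policies))  # ['require-tls']
```

Because the rules live in the XML rather than in the checking code, changing a policy means editing metadata, which is the flexibility the paragraph above describes.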

This stage is also when run time SOA governance begins. Certain policies must be enforced at run time, either within the underlying runtime environment, in the management tool, or in the security infrastructure. At this point the SOA registry becomes a central governance tool, because it provides a single discovery point for run time policies. Tool-based interoperability also rises to the fore, as WS-I compliance and compliance with the Governance Interoperability Framework or the CentraSite Community become essential governance policies.
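The "single discovery point" role of the registry can be illustrated with a toy in-memory model. The `PolicyRegistry` class and the service and policy names below are hypothetical and stand in for a real registry product; the point is only that every enforcement point asks the same place which policies apply.

```python
class PolicyRegistry:
    """Toy in-memory registry: one place to publish and look up policies."""

    def __init__(self):
        self._policies = {}  # service name -> list of policy names

    def publish(self, service, policy):
        """Attach a run time policy to a service."""
        self._policies.setdefault(service, []).append(policy)

    def policies_for(self, service):
        """Return a copy so callers cannot mutate the registry's state."""
        return list(self._policies.get(service, []))


registry = PolicyRegistry()
registry.publish("OrderService", "require-tls")
registry.publish("OrderService", "log-all-requests")

# A management tool and a security gateway would both query the same registry.
print(registry.policies_for("OrderService"))  # ['require-tls', 'log-all-requests']
print(registry.policies_for("UnknownService"))  # []
```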

3. Technology-centric SOA governance in the broad

The SOA implementation is up and running. There are a number of services in production, and their lifecycle is fully governed through hard work and proper architectural planning. Taking the SOA approach to responding to new business requirements is becoming the norm. So, when new requirements mean new policies, it's possible to represent some of them as metadata as well, even though the policies aren't specific to SOA.

Such policies are still technology-centric, for example, security policies or data governance policies or the like. Fortunately, the SOA governance infrastructure is up to the task of managing, communicating, and coordinating the enforcement of such policies. By leveraging SOA, it's possible to centralize policy creation and communication, even for policies that aren't SOA-specific.

Sometimes, in fact, new governance requirements can best be met with new services. For example, a new regulatory requirement might lead to a new message auditing policy. Why not build a service to take care of that? This example highlights what we mean by SOA governance in the broad. SOA is in place, so when a new governance requirement comes over the wall, we naturally leverage SOA to meet that requirement.
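As a rough illustration of the message auditing example, the sketch below models a shared audit service that other services call before handling a message. The `AuditService` class, the `audited` helper, and the order handler are all hypothetical names chosen for this sketch; a real implementation would persist records and sit behind a service interface rather than in the same process.

```python
import json
from datetime import datetime, timezone


class AuditService:
    """Hypothetical shared service that records every message it is given."""

    def __init__(self):
        self.records = []

    def record(self, service, message):
        entry = {
            "service": service,
            "at": datetime.now(timezone.utc).isoformat(),
            "message": json.dumps(message, sort_keys=True),
        }
        self.records.append(entry)
        return entry


def audited(audit, service_name, handler):
    """Wrap a message handler so each message is audited before handling."""

    def wrapper(message):
        audit.record(service_name, message)
        return handler(message)

    return wrapper


audit = AuditService()
place_order = audited(audit, "OrderService", lambda msg: msg["qty"] * 2)

print(place_order({"qty": 3}))  # 6
print(len(audit.records))  # 1
```

The existing handler is unchanged; the new regulatory requirement is met by composing it with the audit service, which is the kind of reuse the paragraph above has in mind.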

4. Human-centric SOA governance in the broad

This final stage is the most thought-provoking of all, because it represents the highest maturity level. How can SOA help with the human activities that form the larger picture of governance in the organization? Clearly, XML representations of technical policies aren't the answer here. Rather, the answer lies in how implementing SOA helps expand the governance role architecture plays in the organization. It's a core best practice that architecture should drive IT governance. When the organization has adopted SOA, then SOA helps to inform best practices for IT governance overall.

The impact of SOA on enterprise architecture (EA) is also quite significant. Now that enterprise architects increasingly realize that SOA is a style of EA, EA governance is becoming increasingly service-oriented in form as well. It is at this stage that part of the SOA governance value proposition benefits the business directly, by formalizing how the enterprise represents capabilities consistent with the priorities of the organization.

The ZapThink take

The big win in moving to the fourth stage is how leveraging SOA approaches to formalize EA governance affects the organization's business agility requirement. In some ways business agility is like any other business requirement, in that proper business analysis can delineate the requirement to the point that the technology team can deliver it, the quality team can test for it, and the infrastructure can enforce it. But as we've written before, business agility is an emergent property of the implementation, which makes it fundamentally different from more traditional business requirements.

A critical part of achieving this business agility over time is to break down the business agility requirement into a set of policies, and then establish, communicate, and enforce those policies -- in other words, provide business agility governance. Only now, we're not talking about technology at all. We're talking about transforming how the organization leverages resources in a more agile manner by formalizing its approach to governance by following SOA best practices at the EA level. Organizations must understand the role SOA governance plays in achieving this long-term strategic vision for the enterprise.
